dribnet/pixray-text2image ❓📝 → 🖼️

About

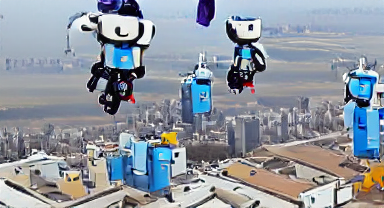

Uses pixray to generate an image from a text prompt.

Example Output

Output


Performance Metrics

- Prediction Time: 250.60 s
- Total Time: 251.82 s
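The two timings imply a small gap outside the prediction itself. This minimal sketch computes that gap. It assumes that Total Time is Prediction Time plus overhead such as queueing or setup; the page does not say what the difference covers. The iteration count of 250 comes from the execution log below. Prediction time also includes model loading, so dividing by it gives only an upper bound per step.

```python
# Timings copied from the Performance Metrics above, in seconds.
PREDICTION_TIME_S = 250.60
TOTAL_TIME_S = 251.82
# Iteration count taken from the execution log ("iter: 250, finished").
ITERATIONS = 250

def overhead_s(total_s: float, prediction_s: float) -> float:
    """Time not attributed to the prediction, rounded to 10 ms.

    Assumes total = prediction + overhead (not stated on the page).
    """
    return round(total_s - prediction_s, 2)

def max_s_per_iteration(prediction_s: float, iterations: int) -> float:
    """Upper bound on seconds per optimisation step.

    The prediction time also covers model loading, so the real per-step cost is lower.
    """
    return prediction_s / iterations

print(overhead_s(TOTAL_TIME_S, PREDICTION_TIME_S))  # 1.22
print(round(max_s_per_iteration(PREDICTION_TIME_S, ITERATIONS), 3))  # 1.002
```

By this estimate, the roughly 250 optimisation steps dominate the runtime, at about one second each or less.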

All Input Parameters

{
  "aspect": "widescreen",
  "prompts": "Robots skydiving high above the city",
  "quality": "normal"
}

Input Parameters

- `drawer`: render engine
- `prompts`: text prompt
- `settings`: extra settings in `name: value` format. Reference: https://dazhizhong.gitbook.io/pixray-docs/docs/primary-settings
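This minimal sketch shows how a request payload could be assembled from the three documented inputs, with extra options serialized into the `name: value` lines that `settings` expects. The helper names `build_settings` and `build_input` are hypothetical. The drawer value `"vqgan"` is an assumption, chosen to match the VQGAN checkpoint that appears in the execution log. The `aspect` and `quality` options are taken from the example input parameters.

```python
from typing import Mapping

def build_settings(options: Mapping[str, object]) -> str:
    """Serialize options as newline-separated `name: value` lines (hypothetical helper)."""
    lines = []
    for name, value in options.items():
        text = str(value)
        # Each setting must stay on a single line for the `name: value` format.
        if "\n" in text:
            raise ValueError(f"value for {name!r} must fit on one line")
        lines.append(f"{name}: {text}")
    return "\n".join(lines)

def build_input(prompt: str, drawer: str, options: Mapping[str, object]) -> dict:
    """Assemble a payload keyed by the documented input names (hypothetical helper)."""
    return {
        "drawer": drawer,
        "prompts": prompt,
        "settings": build_settings(options),
    }

payload = build_input(
    "Robots skydiving high above the city",
    drawer="vqgan",  # assumed drawer name, matching the VQGAN checkpoint in the log
    options={"aspect": "widescreen", "quality": "normal"},
)
print(payload["settings"])
# aspect: widescreen
# quality: normal
```

If you call the model through Replicate's Python client, a dict like `payload` is what you would pass as `input=` to `replicate.run`. Check the settings reference linked above for the values each option accepts.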

Output Schema

Output

Example Execution Logs

---> BasePixrayPredictor Predict
Using seed: 7848817447306549499
Working with z of shape (1, 256, 16, 16) = 65536 dimensions.
loaded pretrained LPIPS loss from taming/modules/autoencoder/lpips/vgg.pth
VQLPIPSWithDiscriminator running with hinge loss.
Restored from models/vqgan_imagenet_f16_16384.ckpt
Using device: cuda:0
Optimising using: Adam
Using text prompts: ['Robots skydiving high above the city']
/root/.pyenv/versions/3.8.12/lib/python3.8/site-packages/torch/nn/functional.py:3609: UserWarning: Default upsampling behavior when mode=bilinear is changed to align_corners=False since 0.4.0. Please specify align_corners=True if the old behavior is desired. See the documentation of nn.Upsample for details. warnings.warn(
iter: 0, loss: 2, losses: 0.965, 0.0457, 0.943, 0.0472 (-0=>2.001)
iter: 10, loss: 1.81, losses: 0.862, 0.0465, 0.852, 0.046 (-0=>1.807)
iter: 20, loss: 1.69, losses: 0.806, 0.052, 0.785, 0.0511 (-0=>1.694)
iter: 30, loss: 1.66, losses: 0.782, 0.0555, 0.77, 0.0534 (-6=>1.655)
iter: 40, loss: 1.62, losses: 0.764, 0.056, 0.748, 0.0536 (-2=>1.586)
iter: 50, loss: 1.64, losses: 0.779, 0.0543, 0.755, 0.053 (-8=>1.583)
iter: 60, loss: 1.6, losses: 0.759, 0.056, 0.733, 0.0546 (-4=>1.558)
iter: 70, loss: 1.55, losses: 0.729, 0.0566, 0.706, 0.0551 (-0=>1.547)
iter: 80, loss: 1.55, losses: 0.731, 0.0557, 0.705, 0.0539 (-0=>1.546)
iter: 90, loss: 1.59, losses: 0.754, 0.0568, 0.723, 0.0555 (-6=>1.535)
iter: 100, loss: 1.57, losses: 0.749, 0.057, 0.709, 0.0565 (-2=>1.532)
iter: 110, loss: 1.57, losses: 0.744, 0.0581, 0.708, 0.057 (-2=>1.522)
iter: 120, loss: 1.57, losses: 0.741, 0.0591, 0.709, 0.0576 (-12=>1.522)
iter: 130, loss: 1.52, losses: 0.716, 0.0608, 0.682, 0.0593 (-4=>1.515)
iter: 140, loss: 1.57, losses: 0.744, 0.0588, 0.71, 0.0593 (-14=>1.515)
iter: 150, loss: 1.55, losses: 0.733, 0.0606, 0.698, 0.0588 (-24=>1.515)
iter: 160, loss: 1.49, losses: 0.7, 0.0611, 0.668, 0.061 (-0=>1.491)
iter: 170, loss: 1.53, losses: 0.719, 0.0616, 0.691, 0.0592 (-10=>1.491)
iter: 180, loss: 1.55, losses: 0.728, 0.0591, 0.702, 0.0591 (-20=>1.491)
Dropping learning rate
iter: 190, loss: 1.55, losses: 0.725, 0.062, 0.7, 0.0606 (-2=>1.503)
iter: 200, loss: 1.53, losses: 0.721, 0.0601, 0.694, 0.0592 (-3=>1.495)
iter: 210, loss: 1.48, losses: 0.695, 0.0616, 0.666, 0.0614 (-0=>1.484)
iter: 220, loss: 1.54, losses: 0.723, 0.0609, 0.693, 0.0603 (-6=>1.482)
iter: 230, loss: 1.49, losses: 0.697, 0.062, 0.665, 0.0624 (-16=>1.482)
iter: 240, loss: 1.53, losses: 0.717, 0.0617, 0.692, 0.0604 (-26=>1.482)
iter: 250, finished (-2=>1.481)
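The progress lines follow a regular pattern, so they can be parsed for plotting or comparing runs. This minimal sketch assumes the line format shown above stays stable.

The meaning of `(-N=>X)` is inferred from the log, not documented. It appears to be "steps since the best loss" and "best loss so far": from iter 160 to 180, N grows by 10 per report while X stays at 1.491. In these lines the reported loss also matches the sum of the four component losses to within rounding (0.7 + 0.0611 + 0.668 + 0.061 ≈ 1.49).

```python
import re
from typing import List, NamedTuple, Optional

# Matches progress lines such as
# "iter: 10, loss: 1.81, losses: 0.862, 0.0465, 0.852, 0.046 (-0=>1.807)".
ITER_RE = re.compile(
    r"iter: (?P<iter>\d+), loss: (?P<loss>[\d.]+), "
    r"losses: (?P<losses>[\d., ]+?) \(-(?P<since>\d+)=>(?P<best>[\d.]+)\)"
)

class IterStats(NamedTuple):
    iteration: int
    loss: float          # total loss printed for this step
    losses: List[float]  # individual loss terms, in printed order
    since_best: int      # N in "(-N=>X)": assumed steps since the best loss
    best: float          # X in "(-N=>X)": assumed best loss so far

def parse_iter_line(line: str) -> Optional[IterStats]:
    """Parse one progress line; return None for lines that are not progress reports."""
    m = ITER_RE.search(line)
    if m is None:
        return None
    return IterStats(
        iteration=int(m["iter"]),
        loss=float(m["loss"]),
        losses=[float(x) for x in m["losses"].split(",")],
        since_best=int(m["since"]),
        best=float(m["best"]),
    )

log = """iter: 160, loss: 1.49, losses: 0.7, 0.0611, 0.668, 0.061 (-0=>1.491)
iter: 170, loss: 1.53, losses: 0.719, 0.0616, 0.691, 0.0592 (-10=>1.491)
iter: 180, loss: 1.55, losses: 0.728, 0.0591, 0.702, 0.0591 (-20=>1.491)
iter: 250, finished (-2=>1.481)"""

# Keep only the lines that parsed, then find the step with the lowest printed loss.
steps = [s for s in map(parse_iter_line, log.splitlines()) if s is not None]
lowest = min(steps, key=lambda s: s.loss)
print(len(steps), lowest.iteration, lowest.loss)  # 3 160 1.49
```

The final `iter: 250, finished` line carries no per-step losses, so the parser skips it. If you need the final best value, read it from that line separately.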

Version Details

- Version ID: 50f96fcd1980e7dcaba18e757acbac05e7f2ad4fbcb4a75f86a13c4086df26d0
- Version Created: October 27, 2022