lucataco/realvisxl2-lora-inference 🖼️🔢📝❓✓ → 🖼️

About

A proof of concept (POC) for running inference on Realvisxl2 LoRAs.

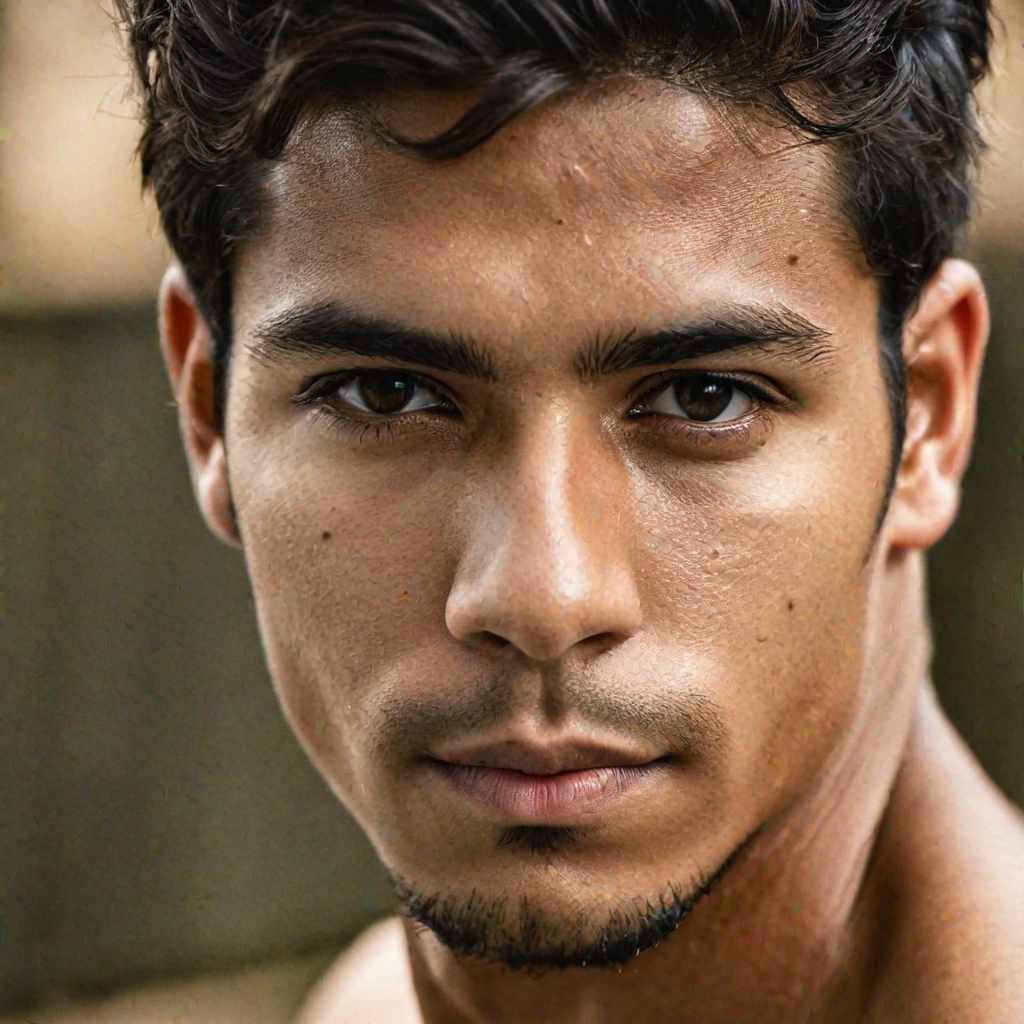

Example Output

Prompt:

"A photo of TOK"

Output

Performance Metrics

- Prediction Time: 25.24s
- Total Time: 115.75s
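As a rough sanity check on these figures, the sketch below does the arithmetic. It assumes (the page does not say so) that Total Time includes queueing and model setup on top of Prediction Time, and it takes the 50 steps at roughly 3.69 it/s from the execution logs further down. The variable names are illustrative only.

```python
# Figures copied from this page.
prediction_time_s = 25.24
total_time_s = 115.75
num_inference_steps = 50
steps_per_second = 3.69  # final tqdm rate reported in the execution logs

# Assumption: time outside the prediction itself is queueing/boot overhead.
overhead_s = round(total_time_s - prediction_time_s, 2)

# Time spent in the denoising loop, estimated from the step rate.
denoise_s = round(num_inference_steps / steps_per_second, 2)

print(f"time outside prediction: {overhead_s}s")
print(f"estimated denoising loop: {denoise_s}s")
```

Under these assumptions, the denoising loop accounts for only part of the prediction time; the rest would be pipeline and LoRA loading, which the logs also show.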

All Input Parameters

{
  "seed": 6995,
  "width": 1024,
  "height": 1024,
  "prompt": "A photo of TOK",
  "refine": "no_refiner",
  "lora_url": "https://replicate.delivery/pbxt/L5zHkM0OHX4ZF1Ipnaiok6GHGvrRgZHBqbz2JjtBAtWz8mdE/trained_model.tar",
  "scheduler": "DPMSolverMultistep",
  "lora_scale": 0.6,
  "num_outputs": 1,
  "guidance_scale": 7.5,
  "apply_watermark": true,
  "high_noise_frac": 0.8,
  "negative_prompt": "(worst quality, low quality, illustration, 3d, 2d, painting, cartoons, sketch), open mouth",
  "prompt_strength": 0.8,
  "num_inference_steps": 50
}
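The parameters above could be sent to the model with the Replicate Python client. The following is a minimal sketch, not the author's own invocation: it assumes the `replicate` package is installed and that `REPLICATE_API_TOKEN` is set in the environment. The helper names `build_input` and `run_prediction` are hypothetical; the model name and version ID are the ones listed on this page.

```python
import os

# Model name and version ID as listed on this page.
MODEL = "lucataco/realvisxl2-lora-inference"
VERSION = "9b5a0c77cd4f6bdb53a2c3d05b4774df02876d21dd7d37f13f518c03e996945b"


def build_input(lora_url: str, prompt: str = "A photo of TOK", seed: int = 6995) -> dict:
    """Return the example payload from this page, with a few fields overridable."""
    return {
        "seed": seed,
        "width": 1024,
        "height": 1024,
        "prompt": prompt,
        "refine": "no_refiner",
        "lora_url": lora_url,  # the only required input
        "scheduler": "DPMSolverMultistep",
        "lora_scale": 0.6,
        "num_outputs": 1,
        "guidance_scale": 7.5,
        "apply_watermark": True,
        "high_noise_frac": 0.8,
        "negative_prompt": "(worst quality, low quality, illustration, 3d, 2d, "
                           "painting, cartoons, sketch), open mouth",
        "prompt_strength": 0.8,
        "num_inference_steps": 50,
    }


def run_prediction(model_input: dict):
    """Send the payload to Replicate (needs network access and an API token)."""
    import replicate  # third-party: pip install replicate

    return replicate.run(f"{MODEL}:{VERSION}", input=model_input)


# Only call the API when a token is actually configured.
if __name__ == "__main__" and os.environ.get("REPLICATE_API_TOKEN"):
    lora = ("https://replicate.delivery/pbxt/"
            "L5zHkM0OHX4ZF1Ipnaiok6GHGvrRgZHBqbz2JjtBAtWz8mdE/trained_model.tar")
    print(run_prediction(build_input(lora)))
```

Keeping the payload in a plain function makes it easy to vary `seed`, `prompt`, or `lora_url` between runs without touching the API call.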

Input Parameters

- mask: Input mask for inpaint mode. Black areas will be preserved, white areas will be inpainted.
- seed: Random seed. Leave blank to randomize the seed.
- image: Input image for img2img or inpaint mode.
- width: Width of output image.
- height: Height of output image.
- prompt: Input prompt.
- refine: Which refine style to use.
- lora_url (required): LoRA model to load.
- scheduler: Scheduler to use.
- lora_scale: LoRA additive scale. Only applicable on trained models.
- num_outputs: Number of images to output.
- refine_steps: For base_image_refiner, the number of steps to refine; defaults to num_inference_steps.
- guidance_scale: Scale for classifier-free guidance.
- apply_watermark: Applies a watermark so that downstream applications can determine whether an image is generated. If you have other provisions for generating or deploying images safely, you can use this to disable watermarking.
- high_noise_frac: For expert_ensemble_refiner, the fraction of noise to use.
- negative_prompt: Input negative prompt.
- prompt_strength: Prompt strength when using img2img / inpaint. 1.0 corresponds to full destruction of information in the image.
- num_inference_steps: Number of denoising steps.
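To make prompt_strength and high_noise_frac more concrete, here is a minimal sketch of how such fractions commonly translate into step counts. It assumes the model follows the usual diffusers conventions (img2img runs about `int(num_inference_steps * prompt_strength)` steps, and an expert-ensemble setup hands over from base model to refiner at `high_noise_frac`). This page does not confirm either behavior, and both function names are hypothetical.

```python
def img2img_steps(num_inference_steps: int, prompt_strength: float) -> int:
    """Denoising steps actually run in img2img/inpaint mode (assumed convention)."""
    return min(int(num_inference_steps * prompt_strength), num_inference_steps)


def ensemble_split(num_inference_steps: int, high_noise_frac: float) -> tuple[int, int]:
    """Approximate (base_steps, refiner_steps) for expert_ensemble_refiner (assumed)."""
    base = round(num_inference_steps * high_noise_frac)
    return base, num_inference_steps - base


# Values from the example input on this page.
print(img2img_steps(50, 0.8))
print(ensemble_split(50, 0.8))
```

With the example values, a prompt_strength of 1.0 would run all 50 steps, which matches the description of fully destroying the information in the input image.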

Output Schema

Output

Example Execution Logs

LORA

Loading ssd txt2img pipeline...

Loading pipeline components...: 0%| | 0/7 [00:00<?, ?it/s]

Loading pipeline components...: 29%|██▊ | 2/7 [00:00<00:00, 18.02it/s]

Loading pipeline components...: 57%|█████▋ | 4/7 [00:00<00:00, 12.25it/s]

Loading pipeline components...: 86%|████████▌ | 6/7 [00:00<00:00, 6.93it/s]

Loading pipeline components...: 100%|██████████| 7/7 [00:01<00:00, 5.83it/s]

Loading pipeline components...: 100%|██████████| 7/7 [00:01<00:00, 6.97it/s]

Loading ssd lora weights...

Loading fine-tuned model

Does not have Unet. Assume we are using LoRA

Loading Unet LoRA

Using seed: 6995

Prompt: A photo of <s0><s1>

txt2img mode

0%| | 0/50 [00:00<?, ?it/s]

/root/.pyenv/versions/3.11.6/lib/python3.11/site-packages/diffusers/models/attention_processor.py:1815: FutureWarning: `LoRAAttnProcessor2_0` is deprecated and will be removed in version 0.26.0. Make sure use AttnProcessor2_0 instead by settingLoRA layers to `self.{to_q,to_k,to_v,to_out[0]}.lora_layer` respectively. This will be done automatically when using `LoraLoaderMixin.load_lora_weights`

deprecate(

2%|▏ | 1/50 [00:00<00:18, 2.59it/s]

4%|▍ | 2/50 [00:00<00:13, 3.65it/s]

6%|▌ | 3/50 [00:00<00:12, 3.68it/s]

8%|▊ | 4/50 [00:01<00:12, 3.70it/s]

10%|█ | 5/50 [00:01<00:12, 3.70it/s]

12%|█▏ | 6/50 [00:01<00:11, 3.70it/s]

14%|█▍ | 7/50 [00:01<00:11, 3.71it/s]

16%|█▌ | 8/50 [00:02<00:11, 3.71it/s]

18%|█▊ | 9/50 [00:02<00:11, 3.71it/s]

20%|██ | 10/50 [00:02<00:10, 3.70it/s]

22%|██▏ | 11/50 [00:03<00:10, 3.71it/s]

24%|██▍ | 12/50 [00:03<00:10, 3.70it/s]

26%|██▌ | 13/50 [00:03<00:09, 3.71it/s]

28%|██▊ | 14/50 [00:03<00:09, 3.71it/s]

30%|███ | 15/50 [00:04<00:09, 3.71it/s]

32%|███▏ | 16/50 [00:04<00:09, 3.70it/s]

34%|███▍ | 17/50 [00:04<00:08, 3.70it/s]

36%|███▌ | 18/50 [00:04<00:08, 3.70it/s]

38%|███▊ | 19/50 [00:05<00:08, 3.70it/s]

40%|████ | 20/50 [00:05<00:08, 3.70it/s]

42%|████▏ | 21/50 [00:05<00:07, 3.70it/s]

44%|████▍ | 22/50 [00:05<00:07, 3.70it/s]

46%|████▌ | 23/50 [00:06<00:07, 3.70it/s]

48%|████▊ | 24/50 [00:06<00:07, 3.70it/s]

50%|█████ | 25/50 [00:06<00:06, 3.70it/s]

52%|█████▏ | 26/50 [00:07<00:06, 3.70it/s]

54%|█████▍ | 27/50 [00:07<00:06, 3.70it/s]

56%|█████▌ | 28/50 [00:07<00:05, 3.70it/s]

58%|█████▊ | 29/50 [00:07<00:05, 3.70it/s]

60%|██████ | 30/50 [00:08<00:05, 3.70it/s]

62%|██████▏ | 31/50 [00:08<00:05, 3.70it/s]

64%|██████▍ | 32/50 [00:08<00:04, 3.70it/s]

66%|██████▌ | 33/50 [00:08<00:04, 3.70it/s]

68%|██████▊ | 34/50 [00:09<00:04, 3.70it/s]

70%|███████ | 35/50 [00:09<00:04, 3.69it/s]

72%|███████▏ | 36/50 [00:09<00:03, 3.69it/s]

74%|███████▍ | 37/50 [00:10<00:03, 3.69it/s]

76%|███████▌ | 38/50 [00:10<00:03, 3.69it/s]

78%|███████▊ | 39/50 [00:10<00:02, 3.69it/s]

80%|████████ | 40/50 [00:10<00:02, 3.69it/s]

82%|████████▏ | 41/50 [00:11<00:02, 3.69it/s]

84%|████████▍ | 42/50 [00:11<00:02, 3.69it/s]

86%|████████▌ | 43/50 [00:11<00:01, 3.69it/s]

88%|████████▊ | 44/50 [00:11<00:01, 3.69it/s]

90%|█████████ | 45/50 [00:12<00:01, 3.69it/s]

92%|█████████▏| 46/50 [00:12<00:01, 3.68it/s]

94%|█████████▍| 47/50 [00:12<00:00, 3.68it/s]

96%|█████████▌| 48/50 [00:13<00:00, 3.68it/s]

98%|█████████▊| 49/50 [00:13<00:00, 3.68it/s]

100%|██████████| 50/50 [00:13<00:00, 3.68it/s]

100%|██████████| 50/50 [00:13<00:00, 3.69it/s]
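The log line `Prompt: A photo of <s0><s1>` suggests that the trigger word `TOK` is replaced by learned placeholder tokens before generation. A minimal sketch of that substitution follows. The `token_map` contents and the function name are assumptions inferred from the log, not taken from the model's source.

```python
def substitute_tokens(prompt: str, token_map: dict[str, str]) -> str:
    """Replace each trigger word in the prompt with its placeholder tokens."""
    for trigger, placeholder in token_map.items():
        prompt = prompt.replace(trigger, placeholder)
    return prompt


# Hypothetical mapping, inferred from the logged prompt.
token_map = {"TOK": "<s0><s1>"}
print(substitute_tokens("A photo of TOK", token_map))  # A photo of <s0><s1>
```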

Version Details

- Version ID: 9b5a0c77cd4f6bdb53a2c3d05b4774df02876d21dd7d37f13f518c03e996945b
- Version Created: November 8, 2023