tarot-cards/transamerica-pyramid

About

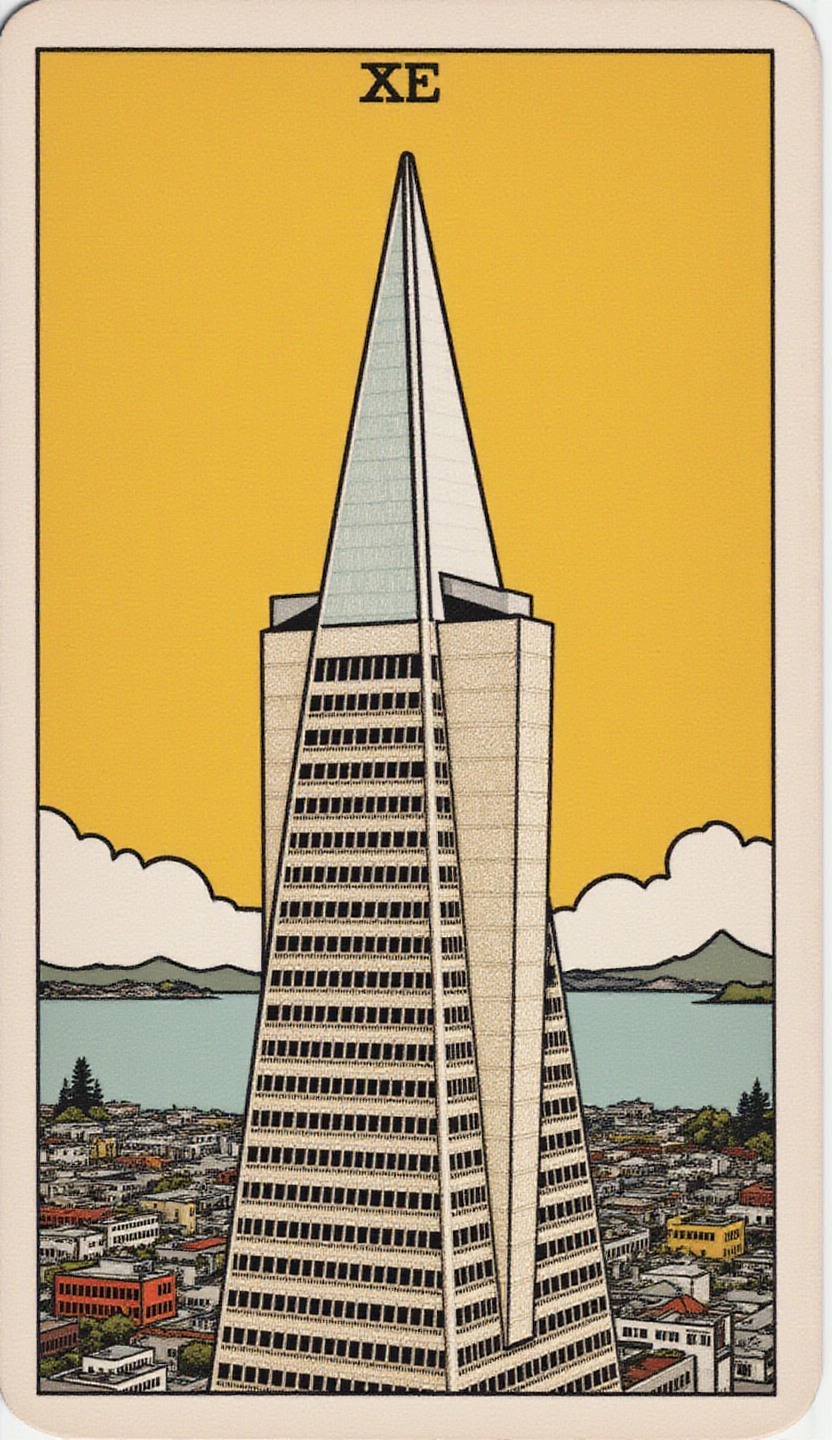

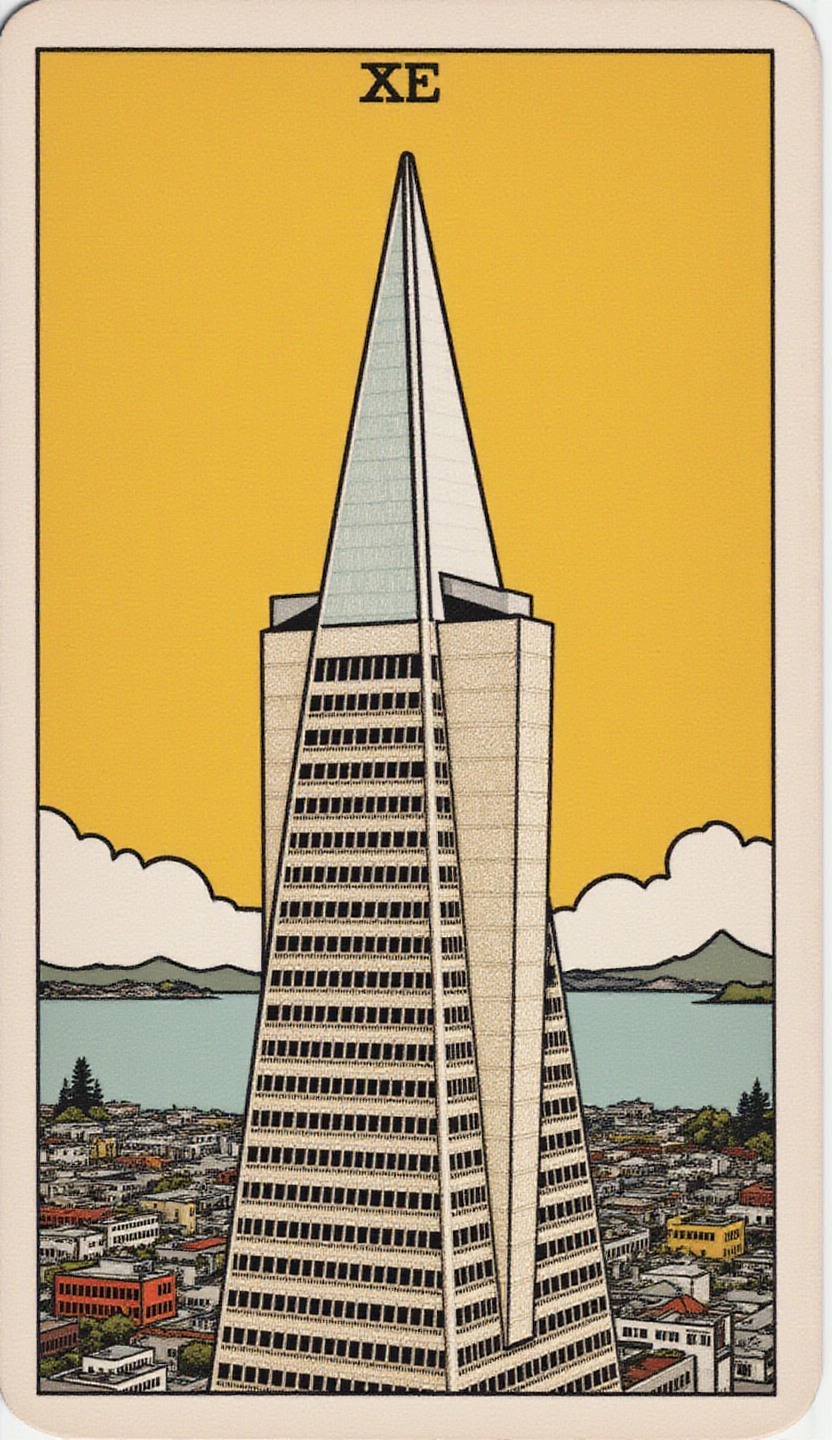

Flux fine-tune of the Transamerica Pyramid building in San Francisco

Example Output

Prompt:

`The zeke/transamerica-pyramid Transamerica building in San Francisco. "the emperor" in the style of TOK a trtcrd, tarot style`

Output

Performance Metrics

- Prediction Time: 9.39s
- Total Time: 9.42s

All Input Parameters

```json
{
  "model": "dev",
  "width": 832,
  "height": 1440,
  "prompt": "The zeke/transamerica-pyramid Transamerica building in San Francisco. \"the emperor\" in the style of TOK a trtcrd, tarot style",
  "go_fast": false,
  "extra_lora": "apolinario/flux-tarot-v1",
  "lora_scale": 1,
  "megapixels": "1",
  "num_outputs": 1,
  "aspect_ratio": "custom",
  "output_format": "webp",
  "guidance_scale": 3.5,
  "output_quality": 100,
  "prompt_strength": 0.8,
  "extra_lora_scale": 1,
  "num_inference_steps": 28
}
```
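To rerun this example programmatically, the parameters above can be sent to Replicate's HTTP predictions endpoint together with the version ID listed under Version Details. The sketch below only builds the request with Python's standard library and sends it only if a token is present. The endpoint URL, the `Bearer` header, and the `REPLICATE_API_TOKEN` environment variable are assumptions based on Replicate's public HTTP API and should be checked against its current reference; `build_prediction_request` is a hypothetical helper name. The optional seed is taken from the example log, and pinning it is not guaranteed to reproduce the image exactly.

```python
import json
import os
import urllib.request

# Version ID from "Version Details" on this page.
VERSION_ID = "c9e3e8f4858f6baa8a9df266ee7e9214596202bb41972f152f7b9a6ad433b3fe"

# Inputs copied from "All Input Parameters" above.
EXAMPLE_INPUT = {
    "model": "dev",
    "width": 832,
    "height": 1440,
    "prompt": (
        "The zeke/transamerica-pyramid Transamerica building in San Francisco. "
        '"the emperor" in the style of TOK a trtcrd, tarot style'
    ),
    "go_fast": False,
    "extra_lora": "apolinario/flux-tarot-v1",
    "lora_scale": 1,
    "megapixels": "1",
    "num_outputs": 1,
    "aspect_ratio": "custom",
    "output_format": "webp",
    "guidance_scale": 3.5,
    "output_quality": 100,
    "prompt_strength": 0.8,
    "extra_lora_scale": 1,
    "num_inference_steps": 28,
}


def build_prediction_request(token, inputs=EXAMPLE_INPUT, seed=None):
    """Build (but do not send) a POST request for a new prediction.

    Assumption: endpoint and auth header follow Replicate's HTTP API.
    """
    payload = {"version": VERSION_ID, "input": dict(inputs)}  # copy, never mutate the defaults
    if seed is not None:
        # The example log reports "Using seed: 10868".
        payload["input"]["seed"] = seed
    return urllib.request.Request(
        "https://api.replicate.com/v1/predictions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Authorization": f"Bearer {token}", "Content-Type": "application/json"},
        method="POST",
    )


if __name__ == "__main__":
    token = os.environ.get("REPLICATE_API_TOKEN")
    if token:
        with urllib.request.urlopen(build_prediction_request(token, seed=10868)) as resp:
            print(json.load(resp))
    else:
        print("Set REPLICATE_API_TOKEN to send the request.")
```

The JSON response describes a prediction that may still be processing, so a client would typically poll it until it completes.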

Input Parameters

- `mask`: Image mask for image inpainting mode. If provided, the `aspect_ratio`, `width`, and `height` inputs are ignored.
- `seed`: Random seed. Set it for reproducible generation.
- `image`: Input image for image-to-image or inpainting mode. If provided, the `aspect_ratio`, `width`, and `height` inputs are ignored.
- `model`: Which model to run inference with. The `dev` model performs best with around 28 inference steps, while the `schnell` model needs only 4.
- `width`: Width of the generated image. Only applies when `aspect_ratio` is set to `custom`. Rounded to the nearest multiple of 16. Incompatible with fast generation.
- `height`: Height of the generated image. Only applies when `aspect_ratio` is set to `custom`. Rounded to the nearest multiple of 16. Incompatible with fast generation.
- `prompt` (required): Prompt for the generated image. Including the `trigger_word` used during training makes it more likely that the trained object, style, or concept appears in the result.
- `go_fast`: Run faster predictions with a model optimized for speed (currently fp8-quantized); disable to run in the original bf16.
- `extra_lora`: Load additional LoRA weights, applied alongside the main LoRA. Supports Replicate models in the format `<owner>/<model-name>` or `<owner>/<model-name>/<version>`, Hugging Face URLs in the format `huggingface.co/<owner>/<model-name>`, CivitAI URLs in the format `civitai.com/models/<id>[/<model-name>]`, or arbitrary `.safetensors` URLs from the Internet. For example, `fofr/flux-pixar-cars`.
- `lora_scale`: How strongly the main LoRA is applied. Values between 0 and 1 give sensible results for base inference. With `go_fast`, a 1.5x multiplier is applied to this value; scaling the base value by that amount has generally performed well. You may still need to experiment to find the best value for your particular LoRA.
- `megapixels`: Approximate number of megapixels for the generated image.
- `num_outputs`: Number of outputs to generate.
- `aspect_ratio`: Aspect ratio for the generated image. If `custom` is selected, the `width` and `height` inputs are used and inference runs in bf16 mode.
- `output_format`: Format of the output images.
- `guidance_scale`: Guidance scale for the diffusion process. Lower values can give more realistic images. Good values to try are 2, 2.5, 3, and 3.5.
- `output_quality`: Quality when saving the output images, from 0 (lowest) to 100 (best). Not relevant for PNG outputs.
- `prompt_strength`: Prompt strength when using image-to-image mode. A value of 1.0 corresponds to full destruction of the information in the input image.
- `extra_lora_scale`: How strongly the extra LoRA is applied. Values between 0 and 1 give sensible results for base inference. With `go_fast`, a 1.5x multiplier is applied to this value; scaling the base value by that amount has generally performed well. You may still need to experiment to find the best value for your particular LoRA.
- `replicate_weights`: Load LoRA weights. Supports Replicate models in the format `<owner>/<model-name>` or `<owner>/<model-name>/<version>`, Hugging Face URLs in the format `huggingface.co/<owner>/<model-name>`, CivitAI URLs in the format `civitai.com/models/<id>[/<model-name>]`, or arbitrary `.safetensors` URLs from the Internet. For example, `fofr/flux-pixar-cars`.
- `num_inference_steps`: Number of denoising steps. More steps can give more detailed images but take longer.
- `disable_safety_checker`: Disable the safety checker for generated images.
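Several of the parameter descriptions encode rules that are easy to trip over: dimensions snapping to multiples of 16, `width`/`height` applying only when `aspect_ratio` is `custom` and being ignored when an `image` or `mask` is supplied, the 1.5x LoRA multiplier under `go_fast`, and the suggested step counts for `dev` and `schnell`. The following Python sketch restates those rules as client-side checks. All helper names are hypothetical, the model's own validation may behave differently, and the tie-breaking of the rounding (halfway values round up) is an assumption the descriptions do not settle.

```python
from __future__ import annotations

# Step counts quoted in the `model` description.
RECOMMENDED_STEPS = {"dev": 28, "schnell": 4}

# Multiplier the `lora_scale` / `extra_lora_scale` descriptions say go_fast applies.
GO_FAST_LORA_MULTIPLIER = 1.5


def round_to_multiple_of_16(value: int) -> int:
    """Round a dimension to the nearest multiple of 16 (ties round up: an assumption)."""
    return ((value + 8) // 16) * 16


def effective_lora_scale(scale: float, go_fast: bool) -> float:
    """Scale actually applied to a LoRA once the go_fast multiplier is considered."""
    return scale * GO_FAST_LORA_MULTIPLIER if go_fast else scale


def check_inputs(params: dict) -> list[str]:
    """Return human-readable warnings for combinations the docs call out."""
    warnings: list[str] = []
    if "image" in params or "mask" in params:
        # img2img / inpainting mode ignores the size-related inputs.
        ignored = [k for k in ("aspect_ratio", "width", "height") if k in params]
        if ignored:
            warnings.append("ignored with image/mask: " + ", ".join(ignored))
    elif params.get("aspect_ratio") == "custom":
        for key in ("width", "height"):
            if key in params and params[key] % 16:
                rounded = round_to_multiple_of_16(params[key])
                warnings.append(f"{key}={params[key]} will be rounded to {rounded}")
        if params.get("go_fast"):
            warnings.append("custom width/height are incompatible with go_fast")
    elif "width" in params or "height" in params:
        warnings.append("width/height only apply when aspect_ratio is 'custom'")

    model = params.get("model", "dev")
    steps = params.get("num_inference_steps")
    suggested = RECOMMENDED_STEPS.get(model)
    if steps is not None and suggested is not None and steps != suggested:
        warnings.append(f"about {suggested} steps are suggested for {model}, got {steps}")
    return warnings
```

For the example inputs on this page (`custom`, 832x1440, `go_fast` off, 28 steps on `dev`), `check_inputs` returns no warnings, since both dimensions are already multiples of 16.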

Output Schema

Output

Example Execution Logs

```text
2025-02-04 06:20:30.806 | DEBUG | fp8.lora_loading:apply_lora_to_model:574 - Extracting keys
2025-02-04 06:20:30.806 | DEBUG | fp8.lora_loading:apply_lora_to_model:581 - Keys extracted
Applying LoRA: 0%| | 0/304 [00:00<?, ?it/s]
Applying LoRA: 94%|█████████▍| 285/304 [00:00<00:00, 2848.68it/s]
Applying LoRA: 100%|██████████| 304/304 [00:00<00:00, 2750.42it/s]
2025-02-04 06:20:30.917 | SUCCESS | fp8.lora_loading:unload_loras:564 - LoRAs unloaded in 0.11s
free=28885966225408
Downloading weights
2025-02-04T06:20:30Z | INFO | [ Initiating ] chunk_size=150M dest=/tmp/tmp9z_wcc1a/weights url=https://replicate.delivery/xezq/bH3EqYKdqiK7BlzHakPqDj0tn8fzI9xlcYuvOZzhHho0C7FKA/trained_model.tar
2025-02-04T06:20:31Z | INFO | [ Complete ] dest=/tmp/tmp9z_wcc1a/weights size="172 MB" total_elapsed=0.951s url=https://replicate.delivery/xezq/bH3EqYKdqiK7BlzHakPqDj0tn8fzI9xlcYuvOZzhHho0C7FKA/trained_model.tar
Downloaded weights in 0.97s
free=28885793337344
Downloading weights
2025-02-04T06:20:31Z | INFO | [ Initiating ] chunk_size=150M dest=/tmp/tmpxgszx8wx/weights url=https://replicate.com/apolinario/flux-tarot-v1/_weights
2025-02-04T06:20:32Z | INFO | [ Redirect ] redirect_url=https://replicate.delivery/yhqm/P0f0U8kSZX3WPyee7NQHScd7S3IwjvC2tWKfiKG7nIOQdXONB/trained_model.tar url=https://replicate.com/apolinario/flux-tarot-v1/_weights
2025-02-04T06:20:32Z | INFO | [ Complete ] dest=/tmp/tmpxgszx8wx/weights size="172 MB" total_elapsed=0.740s url=https://replicate.com/apolinario/flux-tarot-v1/_weights
Downloaded weights in 0.76s
2025-02-04 06:20:32.658 | INFO | fp8.lora_loading:convert_lora_weights:498 - Loading LoRA weights for /src/weights-cache/48e652e259b57e0e
2025-02-04 06:20:32.732 | INFO | fp8.lora_loading:convert_lora_weights:519 - LoRA weights loaded
2025-02-04 06:20:32.732 | DEBUG | fp8.lora_loading:apply_lora_to_model:574 - Extracting keys
2025-02-04 06:20:32.732 | DEBUG | fp8.lora_loading:apply_lora_to_model:581 - Keys extracted
Applying LoRA: 0%| | 0/304 [00:00<?, ?it/s]
Applying LoRA: 94%|█████████▍| 286/304 [00:00<00:00, 2859.56it/s]
Applying LoRA: 100%|██████████| 304/304 [00:00<00:00, 2757.11it/s]
2025-02-04 06:20:32.843 | SUCCESS | fp8.lora_loading:load_lora:539 - LoRA applied in 0.19s
2025-02-04 06:20:32.843 | INFO | fp8.lora_loading:convert_lora_weights:498 - Loading LoRA weights for /src/weights-cache/3ab907c323b24c96
2025-02-04 06:20:32.974 | INFO | fp8.lora_loading:convert_lora_weights:519 - LoRA weights loaded
2025-02-04 06:20:32.974 | DEBUG | fp8.lora_loading:apply_lora_to_model:574 - Extracting keys
2025-02-04 06:20:32.975 | DEBUG | fp8.lora_loading:apply_lora_to_model:581 - Keys extracted
Applying LoRA: 0%| | 0/304 [00:00<?, ?it/s]
Applying LoRA: 94%|█████████▍| 286/304 [00:00<00:00, 2857.31it/s]
Applying LoRA: 100%|██████████| 304/304 [00:00<00:00, 2754.68it/s]
2025-02-04 06:20:33.085 | SUCCESS | fp8.lora_loading:load_lora:539 - LoRA applied in 0.24s
Using seed: 10868
0it [00:00, ?it/s]
1it [00:00, 7.23it/s]
2it [00:00, 5.07it/s]
3it [00:00, 4.61it/s]
4it [00:00, 4.43it/s]
5it [00:01, 4.32it/s]
6it [00:01, 4.26it/s]
7it [00:01, 4.23it/s]
8it [00:01, 4.21it/s]
9it [00:02, 4.19it/s]
10it [00:02, 4.17it/s]
11it [00:02, 4.17it/s]
12it [00:02, 4.17it/s]
13it [00:03, 4.17it/s]
14it [00:03, 4.16it/s]
15it [00:03, 4.15it/s]
16it [00:03, 4.16it/s]
17it [00:03, 4.16it/s]
18it [00:04, 4.16it/s]
19it [00:04, 4.16it/s]
20it [00:04, 4.16it/s]
21it [00:04, 4.15it/s]
22it [00:05, 4.15it/s]
23it [00:05, 4.15it/s]
24it [00:05, 4.16it/s]
25it [00:05, 4.16it/s]
26it [00:06, 4.15it/s]
27it [00:06, 4.15it/s]
28it [00:06, 4.15it/s]
28it [00:06, 4.22it/s]
Total safe images: 1 out of 1
```
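The log shows the pipeline order: previously loaded LoRAs are unloaded, the fine-tune weights and the `apolinario/flux-tarot-v1` weights are downloaded, each LoRA is loaded and applied, and then 28 denoising steps run. A rough time breakdown can be pulled out with a small parser. This Python sketch assumes the `<timestamp> | <LEVEL> | <source> - <message>` layout of the timestamped lines and treats the last step-counter line as the final step count and average rate; the downloader lines, which use a different timestamp format, are skipped. The function name `summarize` is hypothetical.

```python
import re

# Assumed layout: "<date> <time> | <LEVEL> | <module>:<function>:<line> - <message>"
LOG_LINE = re.compile(
    r"^(?P<time>\d{4}-\d{2}-\d{2} \d{2}:\d{2}:\d{2}\.\d{3}) \| "
    r"(?P<level>[A-Z]+) \| (?P<source>\S+) - (?P<message>.*)$"
)
LORA_APPLIED = re.compile(r"LoRA applied in (?P<seconds>\d+(?:\.\d+)?)s")
# Denoising progress lines such as "28it [00:06, 4.22it/s]"; "?it/s" does not match.
STEP_LINE = re.compile(r"^(?P<steps>\d+)it \[\d{2}:\d{2}, (?P<rate>\d+(?:\.\d+)?)it/s\]$")


def summarize(log_text: str) -> dict:
    """Count log levels, collect LoRA apply times, and estimate denoising time."""
    levels: dict = {}
    lora_seconds = []
    steps, rate = 0, None
    for raw in log_text.splitlines():
        line = raw.strip()
        entry = LOG_LINE.match(line)
        if entry:
            levels[entry["level"]] = levels.get(entry["level"], 0) + 1
            applied = LORA_APPLIED.search(entry["message"])
            if applied:
                lora_seconds.append(float(applied["seconds"]))
            continue
        step = STEP_LINE.match(line)
        if step:
            # Later progress lines overwrite earlier ones, so the last one wins.
            steps, rate = int(step["steps"]), float(step["rate"])
    denoise_seconds = steps / rate if rate else None
    return {
        "levels": levels,
        "lora_apply_seconds": lora_seconds,
        "steps": steps,
        "denoise_seconds": denoise_seconds,
    }
```

On the example log, 28 steps at an average of 4.22 it/s come to about 6.6 s, so denoising appears to account for most of the 9.39 s prediction time, with much of the remainder spent downloading and applying LoRA weights.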

Version Details

- Version ID: c9e3e8f4858f6baa8a9df266ee7e9214596202bb41972f152f7b9a6ad433b3fe
- Version Created: February 4, 2025